RTFM

The open retrieval layer your AI coding agent was missing.

RTFM indexes your entire project — source code, documentation, legal text, research papers, structured data — into one local SQLite knowledge base, and serves surgical context to your AI agent over the Model Context Protocol. Works with Claude Code, Cursor, Codex, Claude Desktop, and any other MCP client.

It is open source (MIT), runs entirely locally (no cloud, no API keys, no telemetry), and extends to any file format in ~50 lines of Python or ~30 lines of YAML.

- Quick start: Install and index your first project in two commands.

- Two levels of integration: Use 15 built-in parsers, or extend with declarative JSON schema mappings, no code required.

- Multi-domain: Indexes code (Python AST), docs (Markdown headers), legal (XML), research (LaTeX), tabular (CSV/XLSX), notebooks (Jupyter), databases (SQLite).

- Privacy by default: One SQLite file in `.rtfm/`. No external services. Open it with `sqlite3` if you don't trust me.
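Since the index is an ordinary SQLite file, it can be inspected with Python's standard `sqlite3` module as well as the command-line shell. The sketch below lists the tables in a database file without assuming anything about RTFM's schema. The `list_tables` helper and the `example_chunks` table are hypothetical names used for illustration, and the demo runs against a throwaway database so it works anywhere.

```python
import sqlite3
import tempfile
from pathlib import Path


def list_tables(db_path: str) -> list[str]:
    """Return the names of all tables stored in a SQLite file."""
    conn = sqlite3.connect(db_path)
    try:
        rows = conn.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        ).fetchall()
    finally:
        conn.close()
    return [name for (name,) in rows]


# Stand-in database so the sketch is runnable on its own. In a real project
# you would point the helper at the index file instead, for example
# list_tables(".rtfm/library.db").
with tempfile.TemporaryDirectory() as tmp:
    demo_db = Path(tmp) / "demo.db"
    conn = sqlite3.connect(demo_db)
    # Hypothetical table, not RTFM's actual schema.
    conn.execute("CREATE TABLE example_chunks (id INTEGER PRIMARY KEY, body TEXT)")
    conn.commit()
    conn.close()
    print(list_tables(str(demo_db)))
```

Run against a real index, the same helper shows which tables RTFM created, which is a reasonable first step before querying any of them by hand.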

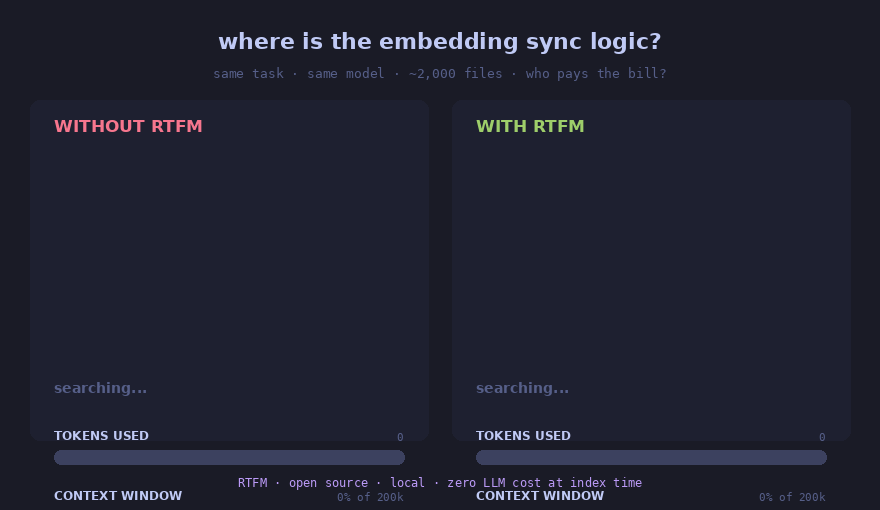

Why RTFM

AI coding agents are blind without retrieval. They grep through thousands of files, lose context every session, hallucinate modules that don't exist. The bottleneck isn't reasoning — it's localization: finding the right files before writing code.

On a document-heavy task (French tax article generation, ~50 pages of sourced regulatory text):

| Metric | Without RTFM | With RTFM | Δ |
|---|---|---|---|
| Token cost | $22.61 | $11.14 | −51% |
| Duration | 8m16s | 6m58s | −16% |
| Tokens used | 8.21M | 3.22M | −61% |

Quick start

The fastest path is the Claude Code plugin — zero pip required:

Then in your project:

That creates `.rtfm/library.db`, indexes the project, and registers RTFM as an MCP server. Ask Claude Code anything that needs to find files; it will search RTFM instead of running grep blindly.

For Cursor / Codex / Claude Desktop / other MCP clients, install via pip:

What RTFM is not

- Not a vector database. Default search is FTS5; embeddings are optional and local (FastEmbed/ONNX, no GPU required).

- Not a hosted service. Everything runs on your machine. No accounts, no API keys, no quotas.

- Not a competing AI agent. It's the retrieval layer underneath your agent of choice.

- Not code-only. Code indexers (Augment, Sourcegraph) ignore your PDFs, your LaTeX, your YAML configs. RTFM indexes everything.
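To make the "FTS5, not vectors" point concrete, here is a minimal sketch of full-text search using SQLite's FTS5 extension from Python. It is not RTFM's implementation: the `chunks` table, its `path` and `body` columns, the sample rows, and the `search` helper are all assumptions made for illustration. It also assumes your Python's bundled SQLite was compiled with FTS5, which is common but not guaranteed.

```python
import sqlite3

# Hypothetical table and column names, for illustration only;
# RTFM's real schema may differ.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE VIRTUAL TABLE chunks USING fts5(path, body)")
conn.executemany(
    "INSERT INTO chunks (path, body) VALUES (?, ?)",
    [
        ("src/auth.py", "def login(user): verify the password hash and open a session"),
        ("docs/setup.md", "Install the package, then run the indexer on your project"),
        ("notes/tax.tex", "Article 39 covers deductible expenses for companies"),
    ],
)


def search(query: str, limit: int = 5) -> list[str]:
    """Return the paths of matching chunks, best BM25 rank first."""
    rows = conn.execute(
        "SELECT path FROM chunks WHERE chunks MATCH ? ORDER BY rank LIMIT ?",
        (query, limit),
    ).fetchall()
    return [path for (path,) in rows]


print(search("password"))             # single keyword
print(search("indexer OR expenses"))  # FTS5 boolean query syntax
```

Keyword search of this kind needs no model, no GPU, and no network call, which fits the local-first design described above. Embeddings can then be layered on for queries where exact terms are not known in advance.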

Where to next

- Why RTFM: How RTFM compares to Augment, Sourcegraph, vector RAG, and Karpathy's LLM Wiki pattern.

- Architecture: Internal layout, parser registry, sync, edges, embeddings.

- Built-in parsers: All 15 formats with parsing strategies and chunk semantics.

- JSON schema mappings: Map any JSON schema to typed chunks with declarative YAML.

- NotebookLM integration: Pair RTFM with `notebooklm-mcp` to break the 50 queries/day cap.

- Obsidian vault mode: Karpathy's LLM Wiki, but searchable past 500 notes.

- RTFM vs vector RAG: When FTS5 beats embeddings, and when both belong together.

- Changelog: Release notes since v0.1.0.